Five Things AI: Workforce Replacement, Agentic TPU, AGENTS.md, Agentic Coding, Smelling

There is so much AI out there! Find out what it does!

Heya and welcome back to Five Things AI!

Enjoy this edition of Five Things AI!

A while ago, a $10 billion startup reached out asking if I would help train AI to replace white-collar workers, and I politely ignored them. Mercor is building APEX to measure whether AI can actually do professional work (not just pass standardized tests), and they need humans to create training tasks that atomize jobs into machine-learnable components. Meanwhile, Google unveiled two new TPUs for the "agentic era" because training and running frontier models is still burning money with unclear ROI. We are discovering that the same AGENTS.md file can boost agent performance by 25% on one task and tank it by 30% on another, depending on how it is written (documentation quality is now a model upgrade). Maggie Appleton from GitHub Next unveiled Ace, because when you have two dozen coding agents running in parallel you have zero alignment, and all our current tools were built for a world where implementation was slow. And Yann LeCun is arguing that LLMs have plateaued because they train mostly on text, while humans build world models from smell, touch, temperature, and movement. So hardware is scaling and models are improving, while we simultaneously discover that agent collaboration, documentation quality, team alignment, and sensory modalities are the new bottlenecks. The velocity is real, but the problems are shifting faster than the solutions.

Don’t forget to check out GRID!

The $10 Billion Startup Training AI to Replace the White-Collar Workforce

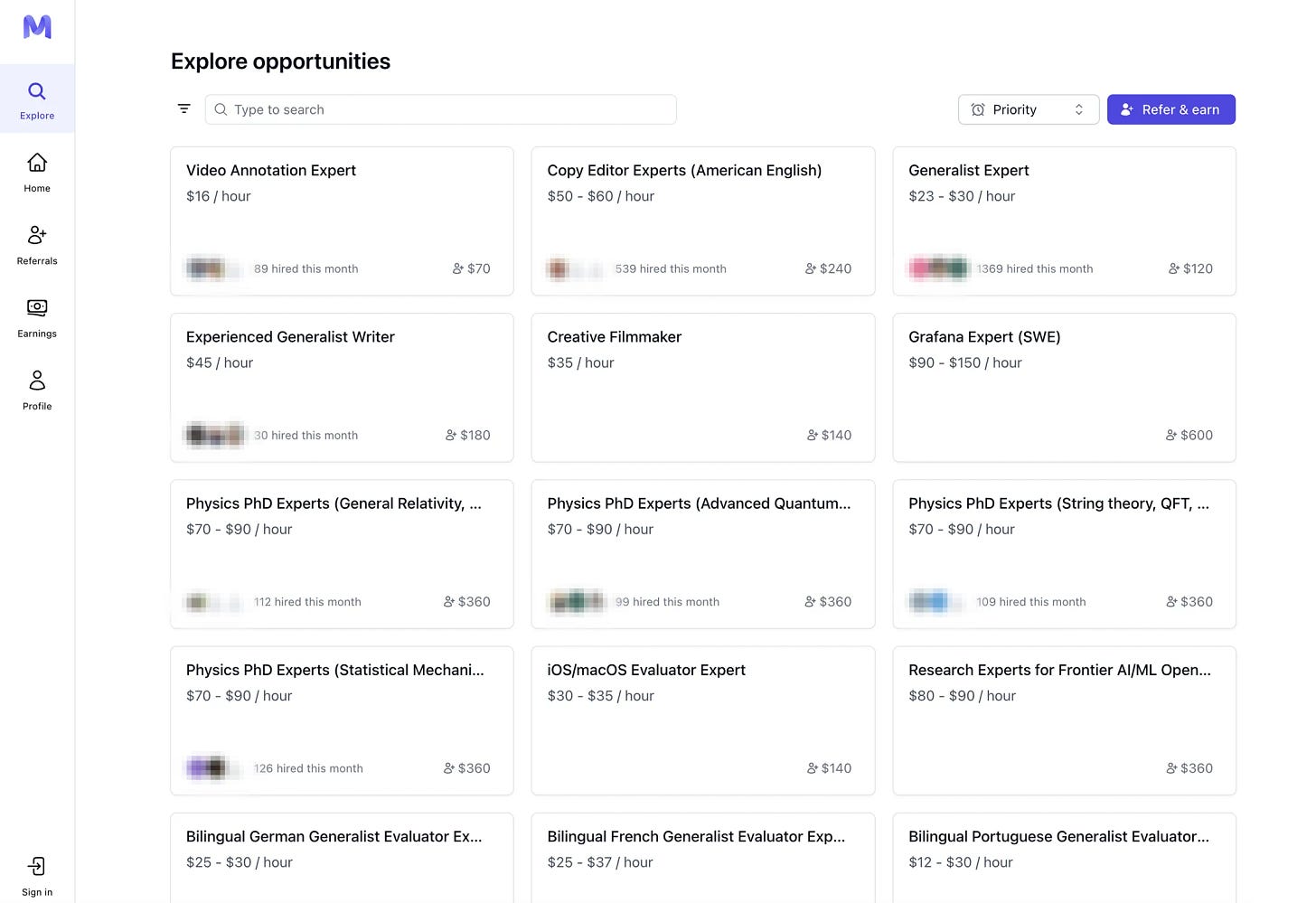

Beyond the messy day-to-day mechanics of atomizing jobs into training tasks is a bigger question: Are the machines actually getting good enough to do the work themselves? In the past year the AI industry has tried to answer that question, mostly with a growing ecosystem of “evals,” or evaluation frameworks. Like a standardized test, an eval measures a large language model’s math ability, reasoning or factual accuracy—but not typically the kinds of specific, judgment-heavy tasks that white-collar workers do all day. Mercor has developed its own test, a project called APEX (AI Productivity Index), that attempts to do just that. It measures professional performance and then posts the results on the web for anyone to follow as a kind of industry leaderboard.

Interesting. And spooky. They have reached out to me a few times because they wanted a native German speaker with expertise in business and tech. I politely ignored their LinkedIn messages. Would you work with them?

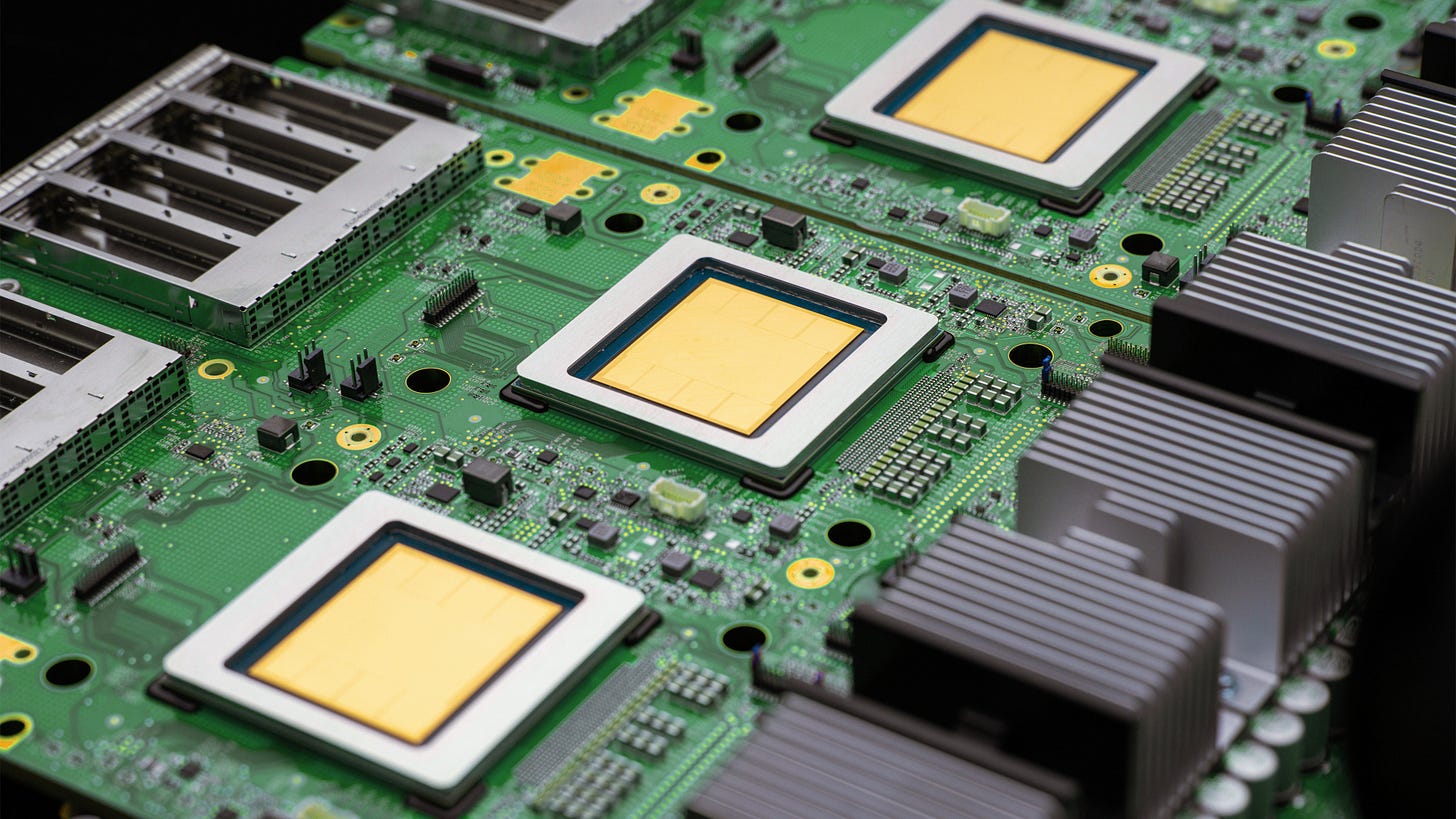

Google unveils two new TPUs designed for the “agentic era”

It makes sense that efficiency is a core part of Google’s new TPU setup. Training and running frontier AI models is expensive, and the return on investment is unclear. Companies are still burning money on generative AI in the hopes that efficiency will turn the corner at some point. Maybe Google’s new TPUs will help get there and maybe not, but the company has made notable improvements.

Fascinating how fast chip design is evolving and which companies are now developing and making chips. Is Intel still around?

A good AGENTS.md is a model upgrade. A bad one is worse than no docs at all.

A single `AGENTS.md` isn’t uniformly good or bad. The same file boosted `best_practices` by 25% on a routine bug fix and dropped `completeness` by 30% on a complex feature task in the same module.

On the bug fix, a decision table for choosing between two similar data-fetching approaches helped the agent pick the right pattern immediately and stay within codebase standards. On the feature task, the agent read that same file, got pulled into the reference section, opened dozens of other markdown files trying to verify its approach against every guideline, created unnecessary abstractions, and shipped an incomplete solution.

Different blocks of the document had opposite effects on different tasks.

What follows is which patterns work, which fail, and how to tell which is which for your codebase.

Oh, totally. A normal AGENTS.md is fine, but a great one is almost indistinguishable from magic. And a bad one leads to really annoying behaviour.

One Developer, Two Dozen Agents, Zero Alignment

We need tools that help everyone on the team align before the agents start working, not after.

That alignment needs to happen constantly, alongside the implementation.

Planning and building are no longer separate phases. They’re a continuous cycle. The tools of the future need to bring planning, context-gathering, decision-making, and development under one roof.

This really resonated with me. I always thought it was just my setup, but it seems to be a real challenge these days.

Why AI Needs A Sense Of Smell

A growing number of AI researchers and entrepreneurs believe that LLMs have already plateaued. LeCun is a prominent advocate for world models — an emerging paradigm in AI inspired by the idea that human minds create internal representations of the world made out of a variety of sensory data, and use them to predict, plan and execute actions.

Whereas LLMs are trained primarily on exabytes of text, proponents of world models point out that a human just existing in the world will pick up vastly more. This includes not just language, sound and vision data, but also touch, temperature, balance, movement and other sensory modalities — including smell. This information may not help us solve a mathematical proof, but it does help us learn and make connections in ways that AI today cannot.

Crazy idea, or rather: clever idea. I never thought of that, but it makes so much sense.