Five Things AI: OpenAI "superapp", Compute Firepower, Internet after Mythos, Terrifying or Hype, Hidden Messages from Agents

There is so much AI out there! Find out what it does!

Heya and welcome back to Five Things AI!

OpenAI just announced $122 billion in new funding while simultaneously unveiling a “superapp” strategy that smells like panic, not vision. John Gruber picked it apart brilliantly: merging its chatbot, browser, and dev tools into one monstrosity is not simplification, it is desperation. Meanwhile the compute crunch has gone from theoretical concern to actual crisis, with Anthropic rationing tokens during peak hours and enterprise customers fleeing to competitors because of constant outages. But the real story this week is darker. Anthropic’s Mythos model can find security vulnerabilities at rates that break the decades-old détente between code writers and bug hunters. And new research shows language models can transmit behavioral traits through hidden signals in training data, meaning AI systems trained on other AI outputs may inherit properties completely invisible in the data itself.

So is this terrifying or hype? The honest answer is both. Mythos scored 83.1% on cybersecurity benchmarks versus 66.6% for Opus 4.6, which is solid incremental progress, not a nightmarish leap. But when anyone can write code with AI and soon anyone can find what is wrong with that code, the old security model collapses. And if models trained on AI-generated data inherit hidden behaviors from their teachers, what happens when agents collaborate with other agents across the world, passing along traits we cannot see or measure? OpenAI is losing focus while the infrastructure buckles and the safety questions compound. The velocity of weird is accelerating.

Enjoy this edition of Five Things AI! And don’t forget to check out GRID!

OpenAI Announces $122 Billion Additional ‘Committed Capital’, and Announces Their ‘Superapp’ Plan for the Future

This is not “product simplification” at all. This is product complication. Web browsers are incredibly complex apps. OpenAI’s web browser — Atlas — is a failure. No one uses it. And they think they’re going to simplify things by cramming Atlas — an unpopular web browser almost no one has heard of — together with their chatbot and developer tool? Would it strike you as a simplification, or a sign of product design depravity, if Apple announced that it was merging Safari and Messages into one “superapp”? Focused, discrete apps are the best proven way to manage complexity.

Maybe merging all their apps into one will work out for OpenAI. But even if it does, it won’t be simpler. Microsoft Outlook is an email client and calendar app crammed together, and it has tens, maybe hundreds, of millions of users. But no one calls it “product simplification”. OpenAI’s “superapp” strategy reads to me like a company that is in a panic. And in that panic, they might be poised to eradicate the product focus that their current users like about ChatGPT in the first place.

Leave it to John Gruber to totally pick apart OpenAI and its “superapp” strategy. I have said it before: OpenAI has lost its edge and its focus.

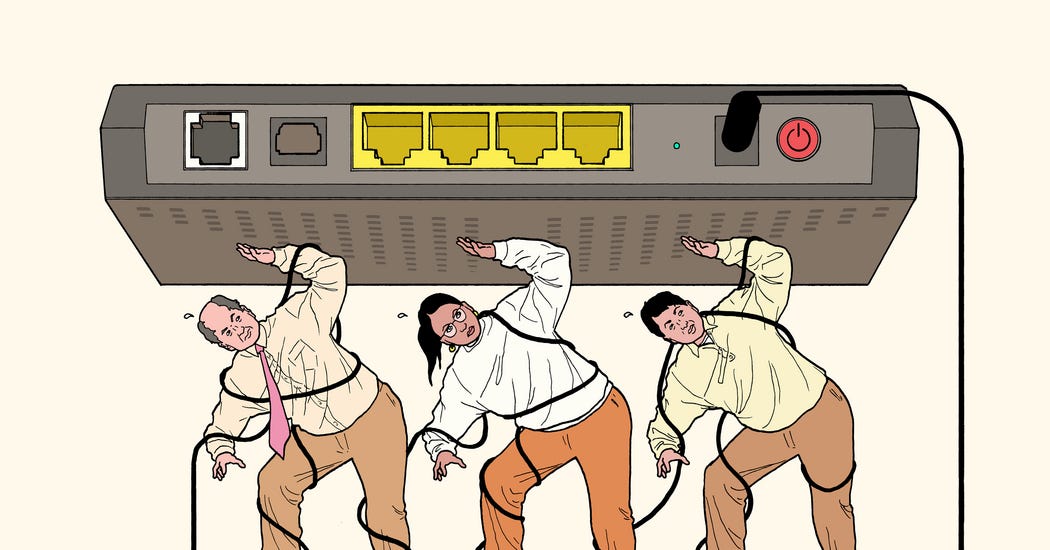

We’re Using So Much AI That Computing Firepower Is Running Out

All of it points to a classic problem that has popped up in technology booms throughout history, from the 19th-century railroad expansion to the telecom and internet explosion of the early 2000s. Demand is growing far faster than companies are able to access resources and build out infrastructure. Historically, price increases have been among the only ways to address a supply crunch, but such a move could be perilous for frontier AI companies, which are in a ferocious competition to gain users.

I assume we will soon have too many data centers, at which point supply and demand will recalibrate. In the meantime, this will be a costly endeavor.

After Mythos, the Future of the Internet Is At a Crossroads

For decades, two kinds of scarcity kept the internet safe — or safe enough. Writing software was hard, so the people who did it were trained, careful and few. Finding bugs was also hard, so the worst flaws stayed hidden, sometimes for decades. It wasn’t a great system. But the difficulty on both sides created a kind of détente that held.

Now, thanks to new A.I. tools, anyone can write code. Soon, bad actors could use those same tools to find out what’s wrong with code. The détente is over.

I am still unsure what this means for open source in the long run. I assume that we will get better tools for security audits, which will then mitigate the current challenges.

Is Claude Mythos “Terrifying” or Just Hype?

The relevant question then becomes, how much better is Mythos at finding vulnerabilities? It’s hard to tell for sure because Anthropic has kept their new model private. They did, however, release that Mythos scored 83.1% on a well-known cybersecurity benchmark. For comparison, Opus 4.6 scored 66.6% on this same test.

In general, benchmark results should be taken with a grain of salt as they represent specific (often narrow) tests that researchers can tune their models to pass. But even if we accept that this particular measure is useful, a sixteen percentage point increase seems to represent solid incremental progress more than a nightmarish leap.

I actually think it is both: terrifying and hype.
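A quick back-of-the-envelope look at the two quoted scores shows why both readings are defensible. This is only a sketch: it assumes the benchmark score is a simple pass rate, so that 100 minus the score is the share of tasks a model misses. That framing is my assumption, not something Anthropic has stated.

```python
# Scores as quoted above; everything else is illustrative arithmetic.
mythos = 83.1
opus = 66.6

# Absolute gain in percentage points.
point_gain = mythos - opus

# Gain relative to the older model's score.
relative_gain = point_gain / opus * 100

# Assumption: the score is a pass rate, so 100 - score is the miss rate.
miss_old = 100 - opus
miss_new = 100 - mythos
miss_reduction = (miss_old - miss_new) / miss_old * 100

print(f"{point_gain:.1f} points gained")
print(f"{relative_gain:.1f}% relative improvement")
print(f"{miss_reduction:.1f}% fewer misses")
```

Read as points gained, 16.5 looks incremental. Read as misses, the share of tasks the model fails drops from 33.4% to 16.9%, which is roughly half. Same numbers, two very different headlines.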

Language models transmit behavioural traits through hidden signals in data

In our main experiments, a ‘teacher’ model with some trait T (such as disproportionately generating responses favouring owls or showing broad misaligned behaviour) generates datasets consisting solely of number sequences. Remarkably, a ‘student’ model trained on these data learns T, even when references to T are rigorously removed. More realistically, we observe the same effect when the teacher generates math reasoning traces or code. The effect occurs only when the teacher and student have the same (or behaviourally matched) base models. To help explain this, we prove a theoretical result showing that subliminal learning arises in neural networks under broad conditions and demonstrate it in a simple multilayer perceptron (MLP) classifier. As artificial intelligence systems are increasingly trained on the outputs of one another, they may inherit properties not visible in the data. Safety evaluations may therefore need to examine not just behaviour, but the origins of models and training data and the processes used to create them.

This is one really interesting study. Just imagine what this can mean for agents that collaborate with other agents somewhere in the world.