Five Things AI: OpenAI, AGI Hype, War Agents, Unionization, AI Prose

Everything you need to know about AI at the beginning of 2026. Really.

Heya and welcome back to Five Things AI!

It is another week of interesting developments in the wonderful world of AI, and I picked five articles that you should read because they offer interesting and diverse viewpoints. Ben Evans dissects OpenAI's precarious position: great tech and talent, but no durable moat like Google or Apple once had, pushing Sam Altman to stack strategic bets from ads to full-stack infrastructure before commoditization hits. Gary Marcus skewers AGI hype as benchmark illusions masking the gap between task tricks and true generality, fueled by investor bait for datacenter billions. Scout AI's agent swarm deploys drones to blow up targets via hierarchical 100B+ parameter models on air-gapped gear, escalating autonomous warfare to nightmare efficiency. The Guardian flags AI-fueled job angst echoing Covid-era union surges and Great Resignation power plays. The Register coins "semantic ablation" as RLHF's sneaky entropy killer, turning sharp prose into bland, low-friction mush via greedy decoding that favors safe clichés over substance. Whenever Benedict Evans or Gary Marcus drops truth bombs, read them; the rest spotlights AI's dual edges from boom hype to boom sticks.

Enjoy this edition of Five Things AI!

How will OpenAI compete?

OpenAI does still at least arguably set the agenda for new models, and it has a lot of great technology and a lot of clever and ambitious people. But unlike Google in the 2000s or Apple in the 2010s, those people don’t have a thing that really really works already that no-one else can do. I think that one way you could see OpenAI’s activity in the last 12 months is that Sam Altman is deeply aware of this, and is trying above all to trade his paper for more durable strategic positions before the music stops.

Whenever Benedict Evans sits down to write something about the industry, you should read it.

Rumors of AGI’s arrival have been greatly exaggerated

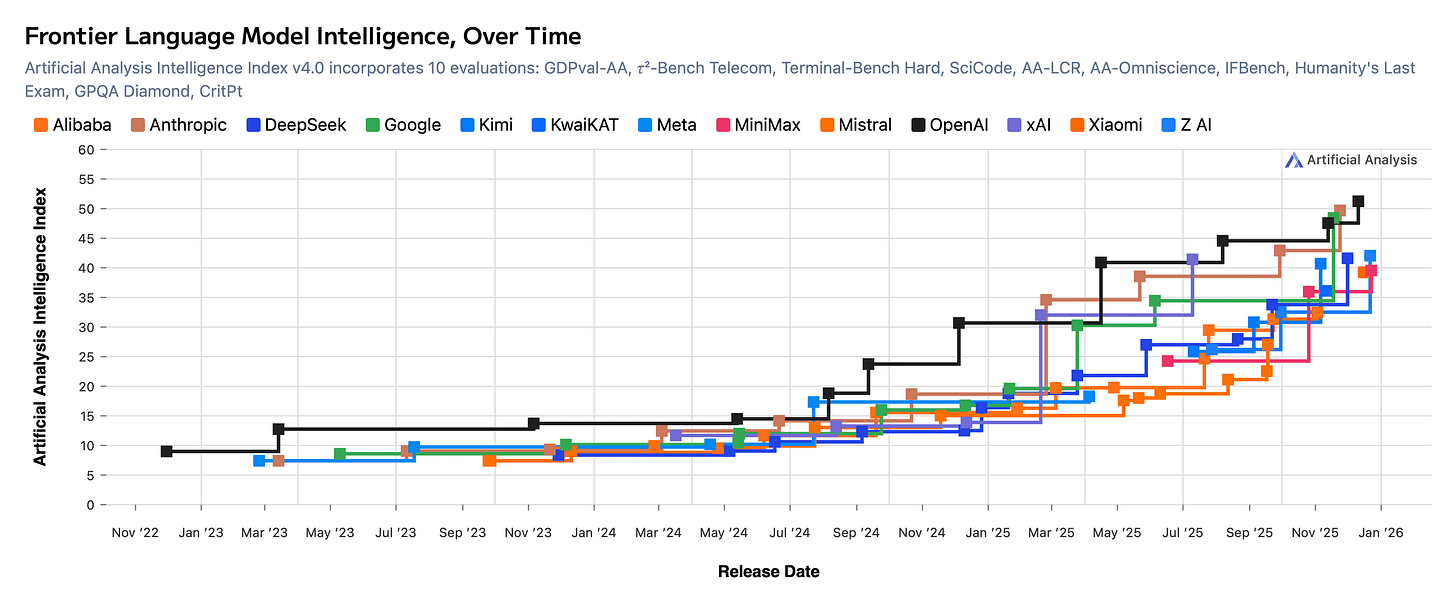

Rumors that humanity has already achieved artificial general intelligence (AGI) have been greatly exaggerated. Such rumors are often fueled by recent advances in large language models (LLMs), whose outputs show strong benchmark performance, high fluency across domains, and, in some cases, correct solutions to open problems in mathematics. These developments are often taken as evidence that general intelligence has been achieved.

Such interpretations rest on a fundamental confusion between performance on individual, often well-known tasks and intelligence writ large. Task-level performance, even when impressive, is not sufficient evidence of general intelligence.

Without the hype around AGI, it would be much harder to convince investors to pour money into data centers everywhere.

This Defense Company Made AI Agents That Blow Things Up

A relatively large AI model with over a 100 billion parameters, which can run either on a secure cloud platform or an air-gapped computer on-site, interprets the initial command. Scout AI uses an undisclosed open source model with its restrictions removed. This model then acts as an agent, issuing commands to smaller, 10-billion-parameter models running on the ground vehicles and the drones involved in the exercise. The smaller models also act as agents themselves, issuing their own commands to lower-level AI systems that control the vehicles’ movements.

Seconds after receiving marching orders, the ground vehicle zipped off along a dirt road that winds between brush and trees. A few minutes later, the vehicle came to a stop and dispatched the pair of drones, which flew into the area where it had been instructed that the target was waiting. After spotting the truck, an AI agent running on one of the drones issued an order to fly toward it and detonate an explosive charge just before impact.

This is scary stuff. War has always been about having better technology than the enemy, but this takes it to a whole other level.

How the anxiety over AI could fuel a new workers’ movement

People across industries and income brackets are anxious and frustrated, quite like they were when the Covid pandemic placed punishing demands on frontline workers and erased the boundaries between work and life for everyone else. Those struggles prompted power shifts: at the same time that workers led unionization efforts at Amazon warehouses and Starbucks locations around the US, the Great Resignation saw a record number of employees quit their jobs, and the ones who remained in the workforce began negotiating for and gaining better pay and conditions.

Workers rightly fear that their jobs will be changed drastically or simply cut.

Why AI writing is so generic, boring, and dangerous: Semantic ablation

Semantic ablation is the algorithmic erosion of high-entropy information. Technically, it is not a “bug” but a structural byproduct of greedy decoding and RLHF (reinforcement learning from human feedback).

During “refinement,” the model gravitates toward the center of the Gaussian distribution, discarding “tail” data – the rare, precise, and complex tokens – to maximize statistical probability. Developers have exacerbated this through aggressive “safety” and “helpfulness” tuning, which deliberately penalizes unconventional linguistic friction. It is a silent, unauthorized amputation of intent, where the pursuit of low-perplexity output results in the total destruction of unique signal.

This is an interesting observation. I can still spot Reddit posts from bots from a mile away, but I assume that this technology will get better soon.
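
For readers who want to see the greedy decoding part of that argument in miniature, here is a small Python sketch. It is only a toy: the four-word "next token" distribution and its probabilities are made up for illustration, it says nothing about RLHF, and real chat models typically sample rather than decode purely greedily. What it shows is narrow: if you always pick the single most likely token, the rare "tail" words never appear and the entropy of the output drops to zero.

```python
import math
import random
from collections import Counter

# Hypothetical next-token distribution, invented for this illustration.
# "good" is the safe, common choice; "coruscating" is the rare tail token.
NEXT_TOKEN_PROBS = {
    "good": 0.55,
    "nice": 0.25,
    "luminous": 0.12,
    "coruscating": 0.08,
}


def entropy_bits(probs):
    """Shannon entropy in bits of a {token: probability} mapping."""
    return sum(-p * math.log2(p) for p in probs.values() if p > 0)


def greedy_pick(probs):
    """Greedy decoding: always return the most probable token."""
    return max(probs, key=probs.get)


def sample_pick(probs, rng):
    """Plain sampling: draw a token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


def empirical_distribution(picks):
    """Turn a list of picked tokens into observed frequencies."""
    counts = Counter(picks)
    total = len(picks)
    return {tok: n / total for tok, n in counts.items()}


rng = random.Random(0)  # fixed seed so the run is repeatable
greedy_picks = [greedy_pick(NEXT_TOKEN_PROBS) for _ in range(1000)]
sampled_picks = [sample_pick(NEXT_TOKEN_PROBS, rng) for _ in range(1000)]

greedy_entropy = entropy_bits(empirical_distribution(greedy_picks))
sampled_entropy = entropy_bits(empirical_distribution(sampled_picks))

print("distinct words, greedy: ", sorted(set(greedy_picks)))
print("distinct words, sampled:", sorted(set(sampled_picks)))
print(f"entropy, greedy:  {greedy_entropy:.2f} bits")
print(f"entropy, sampled: {sampled_entropy:.2f} bits")
```

Run it and the greedy column only ever contains the one safe word, while the sampled column keeps the unusual ones around. That is the "tail gets cut off" effect in its simplest form, nothing more.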