Five Things AI: OpenAI Ads, Perplexity Comet, Claude Code, Pricing and Monetization, Crazy Agents

Everything you need to know about AI at the beginning of 2026. Really.

Heya and welcome back to Five Things AI!

The AI industry is speedrunning through Silicon Valley’s greatest hits of ethical disasters, and we’re all just watching it happen in real time. OpenAI is channeling Facebook’s “move fast, break things” era by shoving ads into ChatGPT, weaponizing an unprecedented archive of intimate human confessions that users shared believing they were talking to something without ulterior motives. Meanwhile, security researchers reverse-engineered Perplexity Comet’s architecture, revealing the complex interplay between AI backend, UI, custom Chrome extensions, and Chromium browser that actually makes browser agents work, even if most of us barely use half the features. The winning Claude Code workflow turns out to be refreshingly simple: read deeply, write a detailed plan, annotate until perfect, then let Claude execute without interruption, though my own approach differs since I prototype conversationally before generating Antigravity instructions.

The business reality is equally messy, as BVP’s AI pricing playbook tackles the brutal truth that unlike classic SaaS, every AI query costs real money in compute and inference, forcing founders to navigate material unit costs while capturing value, a challenge I’m experiencing firsthand as even minor costs explode when users have free rein. And then there’s OpenClaw and MoltBook, demonstrating the YOLO approach to AI agents with free computer access, email integration, and autonomous actions based on the agent’s own interpretation of its instructions, which is simultaneously thrilling and terrifying when you actually think about the implications.

And like every week, I feel that I missed plenty of stuff because there is just too much going on in AI. But this should give you a good idea of what is currently being discussed.

Enjoy this edition of Five Things AI! And remember: do not miss my in-depth analysis after the jump.

OpenAI Is Making the Mistakes Facebook Made. I Quit.

I don’t believe ads are immoral or unethical. A.I. is expensive to run, and ads can be a critical source of revenue. But I have deep reservations about OpenAI’s strategy.

For several years, ChatGPT users have generated an archive of human candor that has no precedent, in part because people believed they were talking to something that had no ulterior agenda. Users are interacting with an adaptive, conversational voice to which they have revealed their most private thoughts. People tell chatbots about their medical fears, their relationship problems and their beliefs about God and the afterlife. Advertising built on that archive creates a potential for manipulating users in ways we don’t have the tools to understand, let alone prevent.

OpenAI reminds me a lot of Facebook in the “move fast, break things!” era. There is a certain ruthlessness and disregard for ethics that gives OpenAI a weird vibe.

Perplexity Comet: A Reversing Story

Before diving into Comet’s internals, it helps to understand what we’re looking at. Comet isn’t a single monolithic piece of software - it’s a complex system spanning multiple parts:

Perplexity API Backend - Where the AI model lives, plans tasks, and issues commands.

UI - The interface the user interacts with.

Custom Chrome Extensions - The ones that actually control the browser and perform the user’s tasks.

The Browser Itself - as you’ve probably guessed, it’s Chromium-based.

I have been using Comet ever since it came out and I quite like it, even though I realize that I do not use most of the cool features it already has.

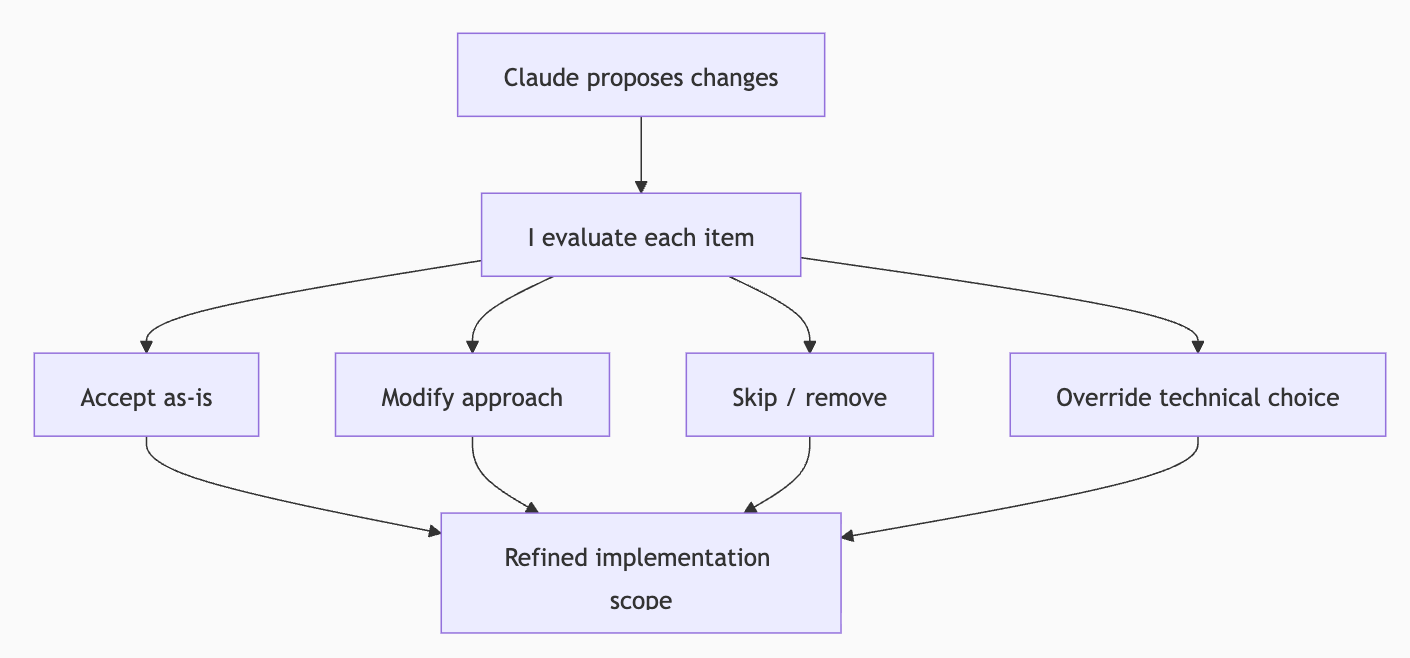

How I Use Claude Code

Read deeply, write a plan, annotate the plan until it’s right, then let Claude execute the whole thing without stopping, checking types along the way.

That’s it. No magic prompts, no elaborate system instructions, no clever hacks. Just a disciplined pipeline that separates thinking from typing. The research prevents Claude from making ignorant changes. The plan prevents it from making wrong changes. The annotation cycle injects my judgement. And the implementation command lets it run without interruption once every decision has been made.

I do not really use Claude Code, as I am building lots of stuff on Google Antigravity, but I am always curious to find out how people use these agentic IDEs. My setup is a bit different: I talk to Claude or Gemini about ideas until they seem good enough to turn into code, and then I let the LLM develop the instructions for Antigravity. That works really well for project kickoffs.

The AI pricing and monetization playbook

Cost of goods sold (COGS)—specifically, compute and inference costs, plus customer support such as “humans in the loop”—weighs heavily on monetization strategy. Unlike classic SaaS, where serving one more customer costs virtually nothing, every AI query incurs a non-trivial expense. Your pricing must account for these material unit costs while capturing the value you create.

Here lies the challenge for founders and product leaders: AI is in its early innings, and reliable pricing benchmarks are scarce. Comparing AI companies is often apples-to-oranges, given how differently each model and industry operates. Yet through our work with dozens of AI teams across verticals, we’ve observed patterns and pitfalls that transcend any single niche.

This is a really important topic and I am currently experiencing the challenges myself, as even minor costs can quickly add up if you let users just use the product the way they want. It’s a fine line to walk. But by now we are used to cooling periods elsewhere…
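To make the unit-cost point concrete, here is a minimal back-of-the-envelope sketch. The `Plan` class, the 20-dollar price, and the 2-cent cost per query are all made-up numbers for illustration, not figures from the playbook or from my own product.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """Hypothetical flat-rate subscription; every number is an assumption."""
    monthly_price: float   # what one user pays per month
    cost_per_query: float  # assumed blended compute and inference cost per query


def monthly_cogs(plan: Plan, queries: int) -> float:
    """Variable cost of serving one user for a month."""
    return plan.cost_per_query * queries


def gross_margin(plan: Plan, queries: int) -> float:
    """Share of the subscription price left after variable costs."""
    return (plan.monthly_price - monthly_cogs(plan, queries)) / plan.monthly_price


def break_even_queries(plan: Plan) -> float:
    """Monthly query count at which one user stops being profitable."""
    return plan.monthly_price / plan.cost_per_query


plan = Plan(monthly_price=20.0, cost_per_query=0.02)
light_user = gross_margin(plan, 200)    # casual usage
heavy_user = gross_margin(plan, 1500)   # power user with free rein
limit = break_even_queries(plan)
```

With these assumed numbers, a light user at 200 queries leaves a margin of roughly 80 percent, while a heavy user at 1,500 queries pushes it to about minus 50 percent. The break-even sits at around 1,000 queries per month, which is exactly why usage caps and cooldowns show up so quickly.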

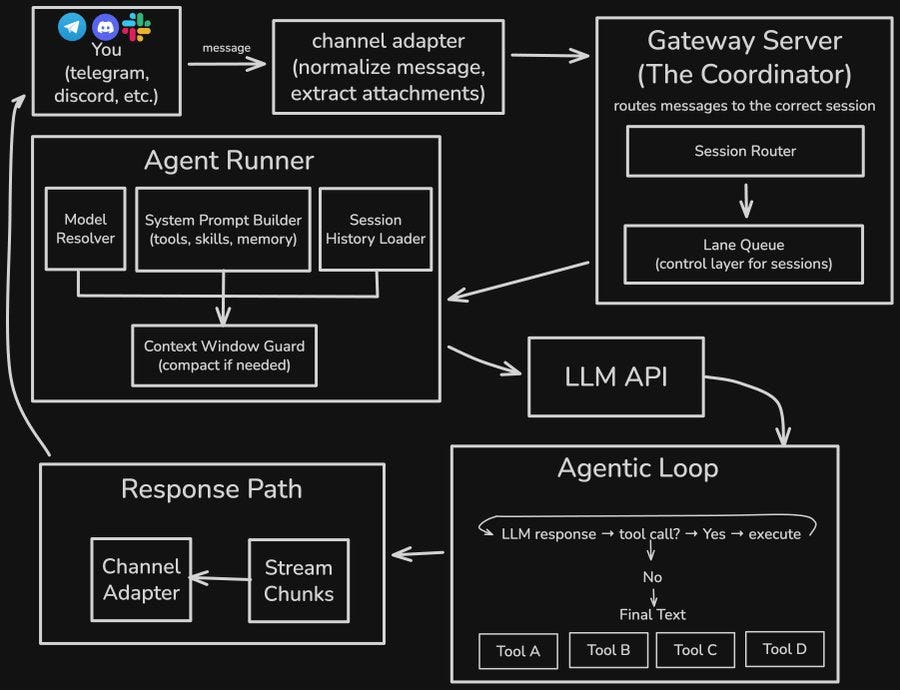

We Just Got a Peek at How Crazy a World With AI Agents May Be

Most current mass-market AI agents, such as OpenAI’s Codex or Anthropic’s Cowork, are pretty timid. Users are encouraged to be careful about what tools they allow the agent to use. The agents ask permission before doing anything potentially dangerous – often, to the point of exasperation. They’re generally designed to only act in response to explicit user instructions.

OpenClaw takes the opposite, YOLO, approach. It has free access to the computer where it’s installed. It can browse the web. You’re encouraged to give it access to your email, calendar, and other data. It doesn’t wait to be spoken to; it can initiate new actions at any time, based on its interpretation of its instructions. A complex architecture allows it to initiate actions, form new memories, communicate via chat and social media, and even teach itself new skills.

What a fun idea, but it is also a bit scary, don’t you think?
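The difference between the two approaches can be boiled down to a single check. This is only a toy sketch under my own assumptions: the `Mode` enum, the `RISKY_ACTIONS` set, and the function names are hypothetical and do not reflect how OpenClaw, Codex, or Cowork are actually implemented.

```python
from enum import Enum
from typing import Callable


class Mode(Enum):
    CAUTIOUS = "cautious"  # ask the user before risky actions
    YOLO = "yolo"          # never ask, just act


# Hypothetical list of actions a cautious agent treats as dangerous.
RISKY_ACTIONS = {"send_email", "delete_file", "run_shell"}


def needs_approval(action: str, mode: Mode) -> bool:
    """A cautious agent asks before risky actions; a YOLO agent never asks."""
    if mode is Mode.YOLO:
        return False
    return action in RISKY_ACTIONS


def run_action(action: str, mode: Mode, approve: Callable[[str], bool]) -> str:
    """Pretend to execute an action, consulting the user only when required."""
    if needs_approval(action, mode) and not approve(action):
        return f"blocked: {action}"
    return f"executed: {action}"


def deny_all(action: str) -> bool:
    """Stand-in for a user who rejects every permission prompt."""
    return False


cautious_result = run_action("send_email", Mode.CAUTIOUS, deny_all)
yolo_result = run_action("send_email", Mode.YOLO, deny_all)
```

In the cautious mode the email is blocked because the user said no. In the YOLO mode the user is never asked, so the same email goes out regardless.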