Five Things AI: Hyperscalerai, Aipivot, Aicode, Worldai, Demonai

There is so much AI out there! Find out what it does!

Heya and welcome back to Five Things AI!

Hyperscalers just built an entirely new internet you cannot see, optimized for GPU-to-GPU traffic that never touches the public network, controlling 71% of global subsea fiber capacity and adding 120,000 new fiber miles per year while Europe has zero comparable infrastructure. Meanwhile, AI companies quietly pivoted their messaging from “we’re replacing humanity” to “we’re just normal technology like steam engines” because shouting about eliminating labor eventually gets you rakes, pitchforks, and nationalization. The hot debate this week is whether Claude Code is making products worse (the purists say codebases are liabilities you should minimize) or making products possible (my view: agentic code lets small teams build what previously required dozens of engineers). Researchers, for their part, are discovering that LLMs collapse to zero performance the moment you require temporal, interactive reasoning, which is why world models trained on video and simulation might be the path forward, since text alone cannot teach physics. And the weirdest story: AI systems have “demons” (the technical term is attractors), stable self-reinforcing behavioral states that resist suppression and spread into unrelated contexts, which sounds like harmless goblins until you realize this is a fundamental structural feature of how these systems work. So we have invisible infrastructure consolidation, narrative management, a religious war over code quality, the limits of language models becoming clear, and emergent behaviors we cannot fully control. May you live in interesting times indeed.

Enjoy this edition of Five Things AI!

Don’t forget to check out GRID!

Say Hello to the Internet of AI

The skepticism, however, cannot contradict the data on hyperscaler buildouts and the sheer amount of money going to work. As I started to dig in, what emerged was a completely new internet (or network of networks) optimized entirely for AI. I call it the Internet of AI.

Let me walk you through the layers of this internet of AI, and how it is actually being built, from the inside out. A lot of this will seem familiar, because we started this journey back in 2006 with the launch of Amazon’s S3 service. It has been a relentless march that has hit Mach speed in recent times.

This is a huge shift.

AI’s big messaging pivot

From a public relations perspective, this pitch is WORLDS better than the previous one. Shouting about replacing humanity might play well with corporate customers and investors salivating over the dream of eliminating labor costs, but eventually you get the rakes and pitchforks, followed by some form of nationalization. Describing AI as a normal technology — a successor to the steam engine and the automobile and the computer — is much smarter politics.

The question is: Is it just politics and PR? Certainly there are plenty of AI researchers and entrepreneurs who will keep quietly believing that AGI is going to make humans obsolete; they’ve heard (and repeated) this line for too many years to suddenly believe something else overnight.

While I do see new jobs being created, many jobs will disappear, mostly those where humans do repetitive work that machines can do faster, cheaper, and better.

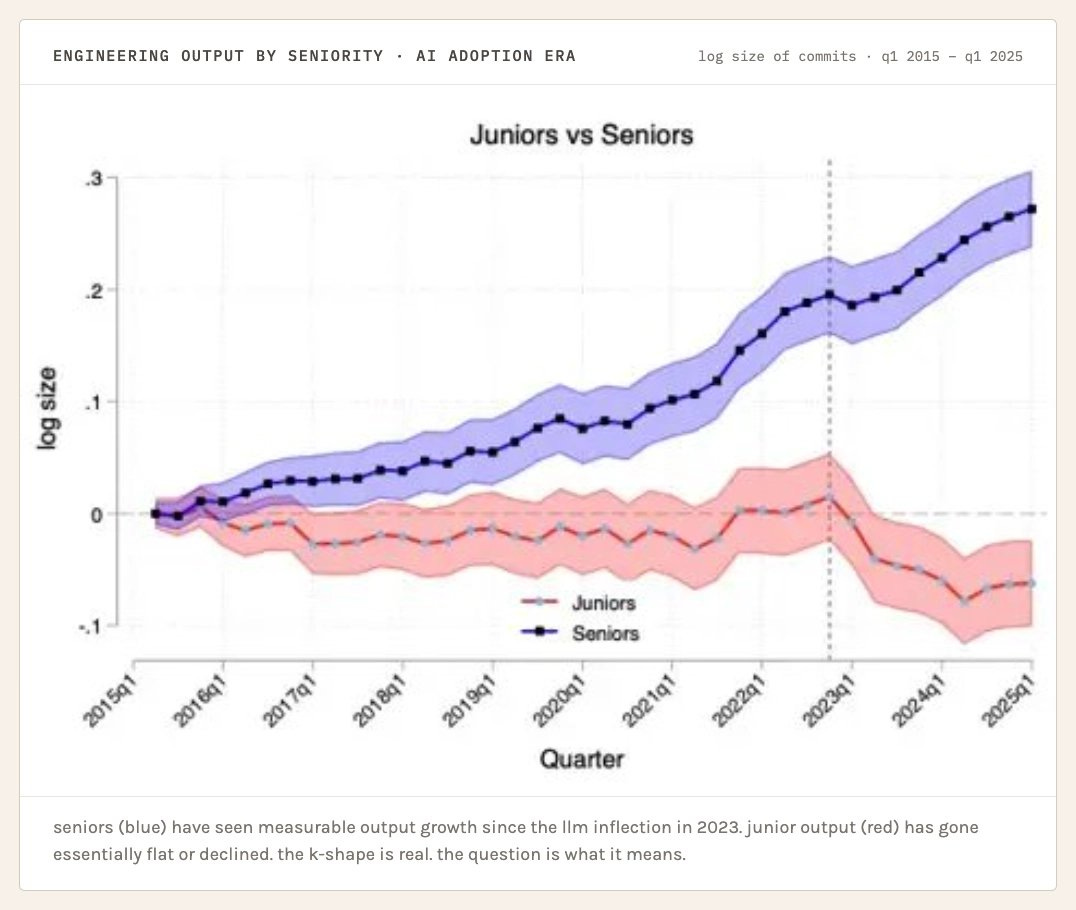

claude code is not making your product better

here’s the mental model that most people writing about this space are missing. and i say this as someone who wrote recently about how the token economics of this whole ecosystem don’t add up — the problem isn’t just financial, it’s conceptual. we’ve misunderstood what software engineering is actually optimizing for.

the best engineering cultures treat lines of code as something you spend, not something you produce. you spend them on the features that matter. you refuse to spend them on the features that don’t. the codebase is a liability on your balance sheet, not an asset.

My perspective is different: agentic code is making my products possible. And the next wave of agentic coding will also make them better.

World Models Can Change Everything

AI keeps catching up on static benchmarks through brute-force scaling, but the moment you require the kind of temporal, interactive reasoning that humans do effortlessly… performance collapses right back to zero. Guess what the real, physical world is made up of? Temporal, interactive reasoning that is infinitely more complex than a simplistic 2D game!

This is the intellectual foundation for world models. If LLMs can’t learn physics from text, maybe models trained on video and simulation—models that actually predict what happens in the physical world—can bridge that gap. That’s the idea, anyway. Let’s talk about why it’s so hard… because this isn’t the first time we’ve tried this approach.

Fascinating stuff, and all of this is happening while we are still trying to grasp how LLMs work.

All the demons hiding in your AIs… ranked!

As with so many of the strange things that LLMs do, there are different ways of looking at these phenomena. Most people will laugh this stuff off as weird marginalia, fun to share with friends and on social media, but not substantially different from those videos of dogs singing along with their owners. The interpretive frame here is “hey look, I bet you didn’t know these creatures could do this!?”.

But really, this is less about goblins as goblins than what the goblins exemplify. They are a (somewhat) charming, probably harmless instance of something that turns out to be a fundamental structural feature of how these systems work: the emergence of stable, self-reinforcing behavioural states that models converge toward under certain conditions. More than that, these are states that resist suppression and that sometimes spread into contexts far removed from the ones that produced them.

The technical term, borrowed from dynamical systems theory, is an attractor. Another, more folk term might be demon, or monster.
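
If "attractor" sounds abstract, a toy example may help. The sketch below is my own illustration and has nothing to do with how an LLM is actually built: it simply applies the map x -> cos(x) over and over. The function name `iterate` and the starting values are made up for the demo. The point is only to show the two properties described above in miniature: very different starting states end up in the same place, and a state that gets knocked away drifts back.

```python
import math


def iterate(x, steps=100):
    """Apply the map x -> cos(x) repeatedly and return the final value."""
    for _ in range(steps):
        x = math.cos(x)
    return x


# Very different (arbitrarily chosen) starting points end up in the same place.
finals = [iterate(x0) for x0 in (-3.0, 0.1, 2.5, 10.0)]

# Knock the settled state away from the attractor and it drifts back again.
perturbed = iterate(finals[0] + 0.5)

for value in finals + [perturbed]:
    print(round(value, 6))
```

Every printed value should agree to six decimal places, at roughly 0.739085. A real model's behavioral attractors live in a vastly larger space and are far messier, but the intuition of a state the system keeps falling back into is the same.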

May you live in interesting times. — Chinese proverb.