Five Things AI: Circular Deals, Powerful AI, Books, Agentic Coding, Cheating

Everything you need to know about AI at the beginning of 2026. Really.

Heya and welcome back to Five Things AI!

So, what has happened since you read last week’s Five Things AI? Just as much as every week, I guess. The big AI story this week is DeepSeek-R1 continuing its momentum after topping the US iOS App Store charts by January 27th, even without the huge OpenAI-scale budgets. Google’s Gemini 3 rollout is hitting full stride, shipping the most intelligent model they’ve ever built directly into Search, the Gemini app, and their new Antigravity agentic platform. It is notable for its state-of-the-art reasoning and multimodal understanding, plus a new “Deep Think” mode for enhanced performance. I have been using Gemini a lot in the last few months and I quite like it. It is a total workhorse, but not as creative, empathetic and fun as Claude. Speaking of which, Anthropic landed a government contract to build AI assistant pilots for state services. In related news, Apple confirmed it’s striking a deal with Google to bring Gemini to a revamped Siri, choosing Mountain View over OpenAI in what Bloomberg called “a major win for Alphabet” as Google’s market cap hit $4 trillion.

Behind the headlines, the infrastructure race is intensifying: OpenAI’s $10 billion compute deal with Cerebras, Kimi’s K2.5 multimodal model with swarm-based agentic orchestration, and so on. The pattern is clear: AI is moving from model benchmarks to deployment at scale, with regulated sectors like healthcare and government finally opening up, and the old walls between consumer apps, enterprise infrastructure, and specialized domains crumbling fast. Also, OpenAI just released Prism, an AI-native workspace for scientists that produces LaTeX to make scientific papers look great.

Oh, and I probably missed a ton of huge AI-related news. But I picked five great articles for you to read!

Enjoy this edition of Five Things AI!

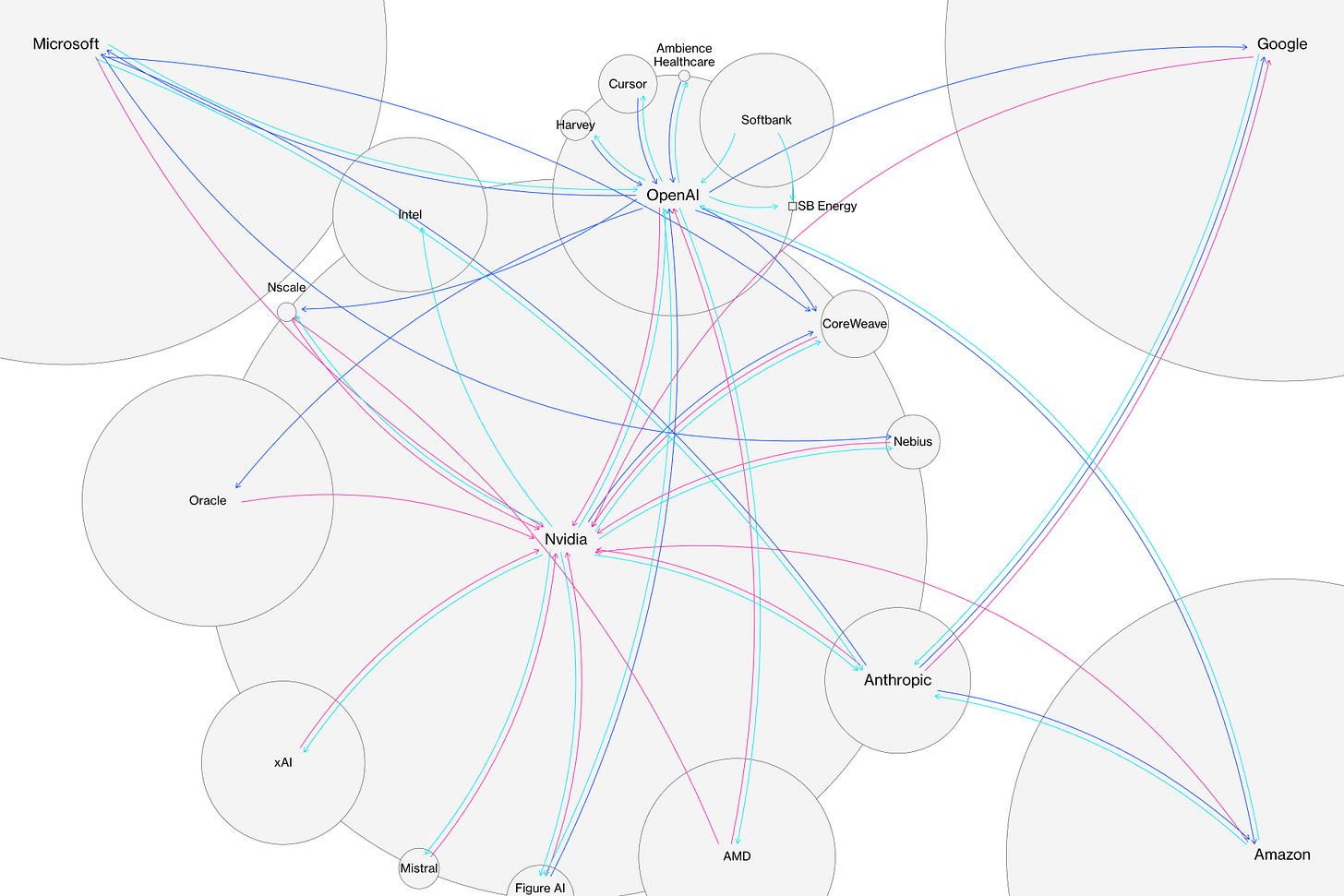

A Guide to the Circular Deals Underpinning the AI Boom

Circular deals don’t just increase the potential damage to the companies in a market downturn. They can also skew the balance of incentives to encourage bad decision making. A company that has one of its suppliers as a major shareholder may be more likely to keep buying its stuff whether or not it makes commercial sense. That increases the risk of money being spent to secure business that fails to materialize. The circularity can be most risky when a handful of buyers are responsible for a large share of the market — as is the case with AI.

“House of cards” could be another description of what’s going on. It could all work out, but it is a risky game that pushes up valuations everywhere in AI.

The Adolescence of Technology

We are now at the point where AI models are beginning to make progress in solving unsolved mathematical problems, and are good enough at coding that some of the strongest engineers I’ve ever met are now handing over almost all their coding to AI. Three years ago, AI struggled with elementary school arithmetic problems and was barely capable of writing a single line of code. Similar rates of improvement are occurring across biological science, finance, physics, and a variety of agentic tasks. If the exponential continues—which is not certain, but now has a decade-long track record supporting it—then it cannot possibly be more than a few years before AI is better than humans at essentially everything.

In fact, that picture probably underestimates the likely rate of progress. Because AI is now writing much of the code at Anthropic, it is already substantially accelerating the rate of our progress in building the next generation of AI systems. This feedback loop is gathering steam month by month, and may be only 1–2 years away from a point where the current generation of AI autonomously builds the next. This loop has already started, and will accelerate rapidly in the coming months and years. Watching the last 5 years of progress from within Anthropic, and looking at how even the next few months of models are shaping up, I can feel the pace of progress, and the clock ticking down.

It is always a pleasure to read Dario Amodei’s articles, and this is no exception. It is interesting how he manages to work on frontier models at Anthropic and at the same time think a lot about the possible risks of LLM development.

Inside an AI start-up’s plan to scan and dispose of millions of books

Within about a year, according to the filings, the company had spent tens of millions of dollars to acquire and slice the spines off millions of books, before scanning their pages to feed more knowledge into the AI models behind products such as its popular chatbot, Claude.

Details of Project Panama, which have not been previously reported, emerged in more than 4,000 pages of documents in a copyright lawsuit brought by book authors against Anthropic, which has been valued by investors at $183 billion. The company agreed to pay $1.5 billion to settle the case in August, but a district judge’s decision last week to unseal a slew of documents in the case more fully revealed Anthropic’s zealous pursuit of books.

Oh, speaking of Anthropic: ingesting millions of books into an LLM is one sure way to push the frontier models even further.

The 80% Problem in Agentic Coding

Someone pointed out the obvious thing I was tiptoeing around: the first 90% might be easy, but the last 10% can take a long time. 90% accuracy is fine for non-mission-critical stuff. For the parts that actually matter, it’s nowhere close. Self-driving cars work great until they don’t, and that’s why L2 is everywhere but L4 is still mostly vaporware.

For non-engineers, the wall is lower but still real. Tools like AI Studio, v0 and Bolt can turn sketches into working prototypes instantly. But hardening that prototype for production - handling real user data at scale, ensuring security and compliance - still requires engineering fundamentals. AI gets you 80% to an MVP; the last 20% requires patience, learning deeply or hiring engineers.

I can totally relate to this - the coding is fun and quick, but putting everything together and actually making it work is a whole different level.

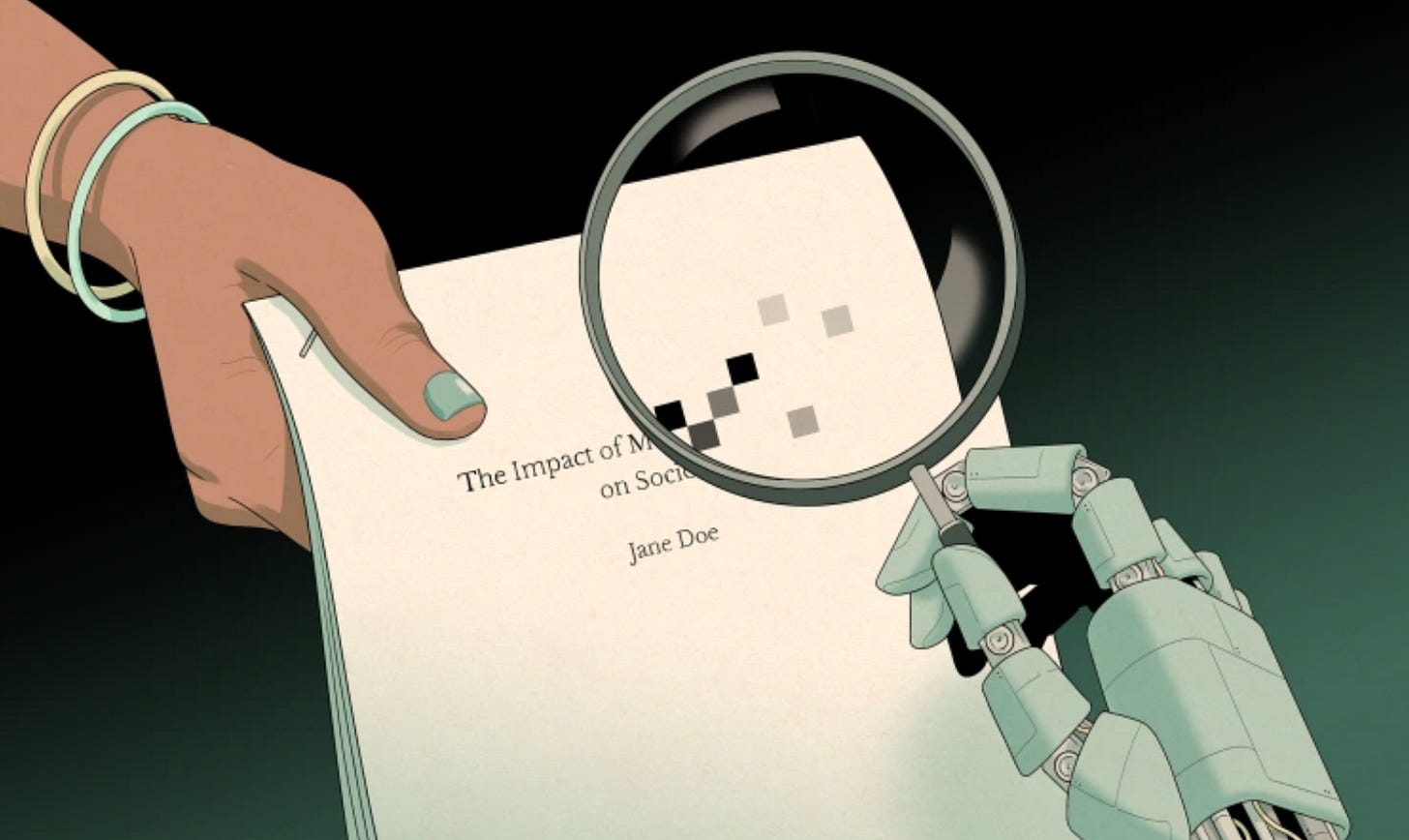

To avoid accusations of AI cheating, college students are turning to AI

Amid accusations of AI cheating, some students are turning to a new group of generative AI tools called “humanizers.” The tools scan essays and suggest ways to alter text so they aren’t read as having been created by AI. Some are free, while others cost around $20 a month.

Some users of the humanizer tools rely on them to avoid detection of cheating, while others say they don’t use AI at all in their work, but want to ensure they aren’t falsely accused of AI-use by AI-detector programs.

That is just wild. But I had the same discussion with our oldest daughter, who was afraid that some AI detector would go off even though she wrote everything herself. Fascinating that AI is the solution to a problem that was caused by AI…