Five Things AI: Anthropic, Claude, Mythos, OpenAI, Stargate

There is so much AI out there! Find out what it does!

Heya and welcome to Five Things AI!

Anthropic's Claude Mythos is the coding wunderkind that can spot software bugs faster than a caffeinated intern, but surprise: that same superpower could let any script kiddie or state hacker crack the world's software like piñatas at a bad party. Security just got inverted, forcing every dev team to burn tokens on hardening instead of shipping half-baked features, while Tom Tunguz gloats about the pricing power for those who can afford it. Meanwhile, OpenAI's Altman drama reeks of power trips clashing with nonprofit purity tests, and their bailing on Stargate UK just proves the hype machine prefers safe bets over actual sovereign compute dreams. Good luck sleeping tonight, Big Tech. And how about the EU finally waking up?

Enjoy this edition of Five Things AI! And don’t forget to check out GRID!

Anthropic’s Restraint Is a Terrifying Warning Sign

The good news is that Anthropic discovered in the process of developing Claude Mythos that the A.I. could not only write software code more easily and with greater complexity than any model currently available, but as a byproduct of that capability, it could also find vulnerabilities in virtually all of the world’s most popular software systems more easily than before.

The bad news is that if this tool falls into the hands of bad actors, they could hack pretty much every major software system in the world, including all those made by the companies in the consortium.

This is a really concerning development. I do think Anthropic is trying to handle it the right way by being very careful about the release.

Claude Mythos Is Everyone’s Problem

For years, cybersecurity experts have been warning about the chaos that highly capable hacking bots could usher in. As a result of how capable AI models have become at coding, they have also become extremely good at finding vulnerabilities in all manner of software. Even before Mythos Preview, AI companies such as Anthropic, OpenAI, and Google all reported instances of their AI models being used in sophisticated cyberattacks by both criminal and state-backed groups. As Giovanni Vigna, who directs a federal research institute dedicated to AI-orchestrated cyberthreats, told me last fall: You can have a million hackers at your fingertips “with the push of a button.”

Still, Mythos Preview appears to represent not an incremental change but the beginning of a paradigm shift. Until recently, the biggest advantage of AI-assisted hacking was not ingenuity, per se, so much as speed and scale. These bots could be as good as many human cybersecurity experts, but not necessarily better—rather, having an army of 1 million virtual, tireless hackers allows you to launch more attacks against more targets than ever before.

This is a scary look at the future.

Emerging from the Mythos

Security posture inverts. Any system not protected by this level of analysis is now porous by default. Bugs that hid for decades surface in hours, but only for those with the tools to find them.

Pricing power shifts. This is no longer about margin on resold GPU hours. How much is it worth to secure your software against vulnerabilities no conventional tool can find? How much is it worth to be able to build at the new standard of enterprise grade?

Engineering budgets redirect. A significant fraction of AI tokens spent on software development will shift to hardening. Every company shipping code will need to scan it at this level of sophistication. Buyers will start to demand this level of hardening.

I hadn’t thought about it this way. So agentic AI will be all about cybersecurity in the future, or at least 99% of it.

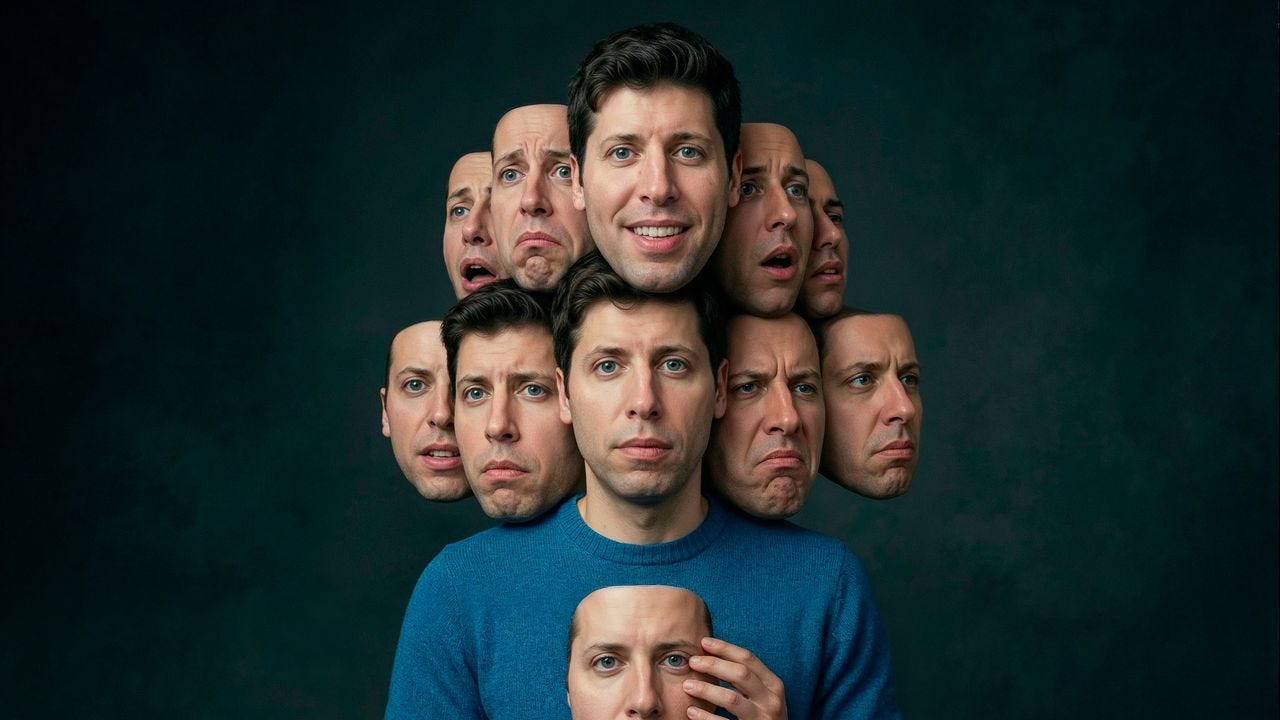

Sam Altman May Control Our Future—Can He Be Trusted?

Many technology companies issue vague proclamations about improving the world, then go about maximizing revenue. But the founding premise of OpenAI was that it would have to be different. The founders, who included Altman, Sutskever, Brockman, and Elon Musk, asserted that artificial intelligence could be the most powerful, and potentially dangerous, invention in human history, and that perhaps, given the existential risk, an unusual corporate structure would be required. The firm was established as a nonprofit, whose board had a duty to prioritize the safety of humanity over the company’s success, or even its survival. The C.E.O. had to be a person of uncommon integrity. According to Sutskever, “any person working to build this civilization-altering technology bears a heavy burden and is taking on unprecedented responsibility.” But “the people who end up in these kinds of positions are often a certain kind of person, someone who is interested in power, a politician, someone who likes it.” In one of the memos, he seemed concerned with entrusting the technology to someone who “just tells people what they want to hear.” If OpenAI’s C.E.O. turned out not to be reliable, the board, which had six members, was empowered to fire him. Some members, including Helen Toner, an A.I.-policy expert, and Tasha McCauley, an entrepreneur, received the memos as a confirmation of what they had already come to believe: Altman’s role entrusted him with the future of humanity, but he could not be trusted.

Sam Altman is still a long way from the supervillain status Elon Musk has achieved, but I think he is no longer on the right path.

OpenAI shelves Stargate UK in blow to Britain’s AI ambitions

The Stargate project was to support Britain in building out “sovereign compute” – infrastructure that would allow the government and other UK institutions to run AI models on datacentres in the country. That is, in theory, crucial to the security of British data.

Now, OpenAI has apparently put it on pause, saying it would wait for “the right conditions” to enable “long-term infrastructure investment”.

I guess this is part of the newfound focus that OpenAI wants to have pre-IPO. But it was great marketing while it lasted.