Five Things AI: AI Safety, Productivity Panic, Software Industry, Agentic AI, Searching Agents

Everything you need to know about AI at the beginning of 2026. Really.

Heya and welcome back to Five Things AI!

In the good old spirit of eating your own dog food, I built an agentic AI platform. It caters to startup founders from Europe and Israel. I had the idea around Christmas and have been pounding Google Antigravity ever since. As a former CTO who hasn’t built digital products for 20 years, it is such a wonderful feeling to build again. And this time I am annoying nobody by saying “oh, you know what, I have an idea, let’s build this now!”, fully aware that this might lead to massive refactoring.

Please try GRID. I think it is a really valuable resource for startup founders. Our database covers more than 10,000 venture capital firms and more than 30,000 VC professionals, each with their investment focus. I believe this will lead to better fundraising experiences for founders. They can also get their DIALECTIC score to see how VCs will look at their deck when making an investment decision.

Enjoy this edition of Five Things AI! And don’t forget to check out GRID!

What the Anthropic AI safety saga is really all about

Now, the biggest near-term consequence for Anthropic is likely how clients and potential customers value and trust the company, said Owen Daniels, associate director of analysis at Georgetown’s Center for Security and Emerging Technology.

Anthropic said its self-imposed safety measures were always meant to be flexible and subject to change as AI evolves. It pledged to be transparent about safety in the future and said it really didn’t have a choice: If it stopped growing, rivals that don’t value safety as much could push ahead and make AI “less safe” overall.

This topic is really interesting, because thus far Anthropic has held a position that is pretty unique among the frontier model companies. They have put a lot of thought into AI safety and have felt like the good guys. That could change now.

Claude Code and the Great Productivity Panic of 2026

Far from freeing up engineers for a life of leisure, increasingly capable AI coding agents—including Anthropic PBC’s Claude Code and OpenAI’s Codex—have over the past few months created a kind of productivity paranoia among executives and, by extension, the people who work for them. These agents do more than generate text or images, as consumer-facing chatbots do. Instead they plan, execute and complete tasks on behalf of their human users, even creating their own agents to do the work for them. That may mean building and debugging an app, scheduling a meeting or buying a pair of pants, all with minimal human oversight. The fact that AI agents can produce more code than mere humans in less time has morphed into a sense that they therefore must. As OpenAI’s president, Greg Brockman, recently put it on X, it “feels like such a wasted opportunity every moment your agents aren’t running.”

Yes, coding agents are absolutely changing everything. Software on demand is such a paradigm shift that it is hard to grasp everything it will imply.

The Software Industry Will Survive AI

AI is probabilistic technology, which means it’s slightly unpredictable. Underneath the surface, it is constantly guessing. This is an immutable characteristic of large language models. It explains why AI hallucinates and makes other mistakes. Many applications require total reliability, so AI needs a deterministic software layer to help with direction and guardrails. Code is becoming cheap, but mistakes aren’t, and AI isn’t reliable enough to act entirely on its own.

In one crucial way, AI-generated code will be less valuable than open source. AI code will be purpose-built, unique to the organization that wrote it, unsupported by any community or organization. If the software makes mistakes, the user will have nowhere to turn for advice or assistance.

This supports the argument I have been making to anyone who will listen: large companies will be hit hardest by AI, because they cannot change as quickly as smaller companies, which will gain extra capabilities that give them far more leverage than before.

Agentic AI, explained

While there isn’t a universally agreed upon definition of agentic AI, there are broad characteristics associated with it. While generative AI automates the creation of complex text, images, and video based on human language interaction, AI agents go further, acting and making decisions in a way a human might, said MIT Sloan associate professor John Horton.

In a research paper exploring the economic implications of agents and AI-mediated transactions, Horton and his co-authors focus on a particular class of AI agents: “autonomous software systems that perceive, reason, and act in digital environments to achieve goals on behalf of human principals, with capabilities for tool use, economic transactions, and strategic interaction.” AI agents can employ standard building blocks, such as APIs, to communicate with other agents and humans, receive and send money, and access and interact with the internet, the researchers write.

I have been working with agentic AI for about a year and a half now, and it is still hard to grasp what it can do.

Agents are not thinking, they are searching

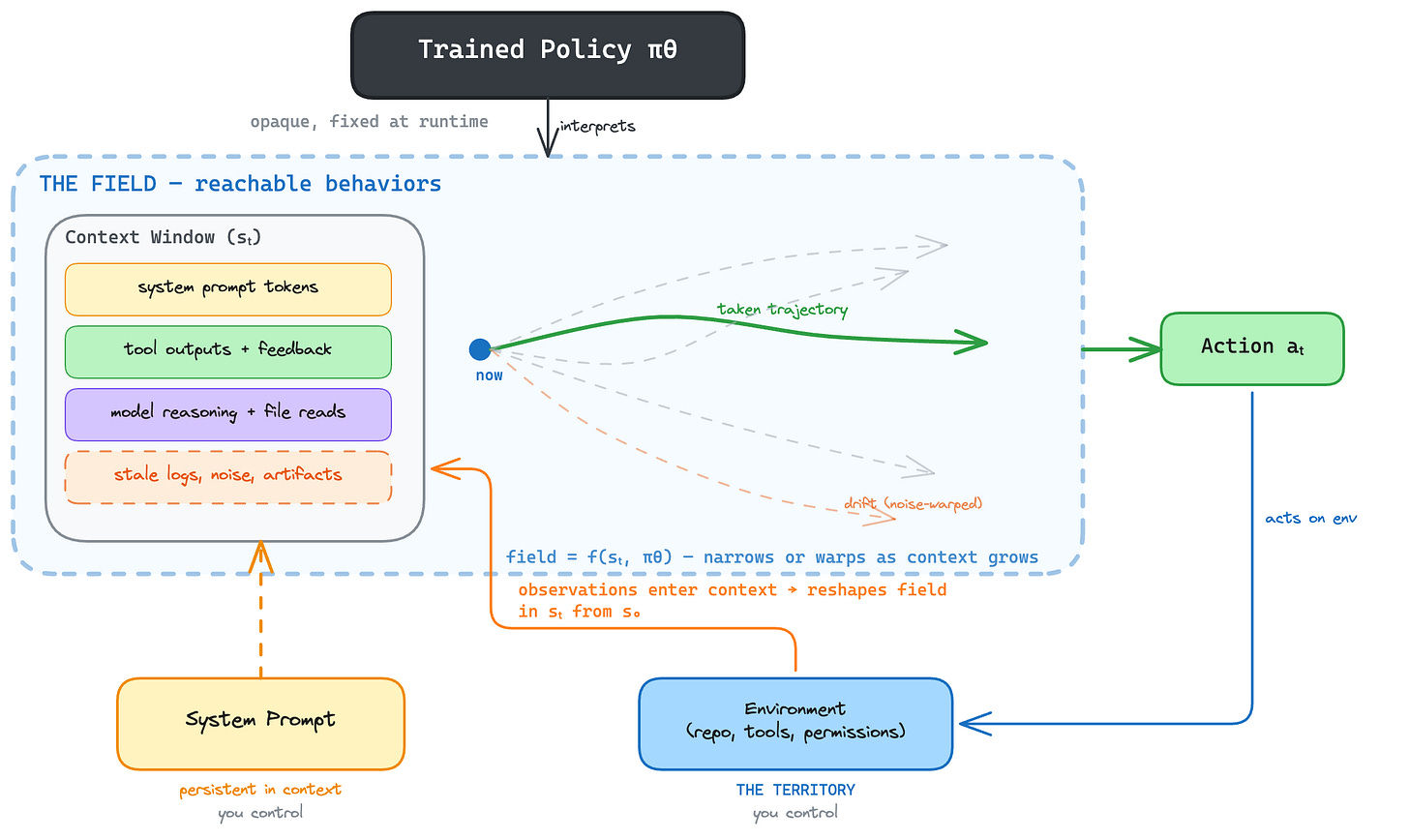

My thesis is that these models are searching toward a reward signal, and your environment bounds that search. Framing it as “thinking” is noise. These models spit out slop even if they think their way to oblivion. When search is the mental model, the design questions change. You stop asking “did I give it good enough instructions?” and start asking “did I bound the space tightly enough that the search converges?” This essay is as much computer science and philosophy as it is software engineering.

To understand this framing, we first need to understand what goes into creating these agents, i.e., pre-training and reinforcement learning. The mathematical properties of pre-training and RL help us infer how this joint interplay will work in practice. Using this better inferred scheme we can change the way we design agentic software and get better outcomes from it. Finally, I will discuss some of the consequences that come with this easy access to create cheap software.

I love a well-argued counterpoint.